Building a ChatGPT App With the Help of ChatGPT

Thursday, 29 December, 2022

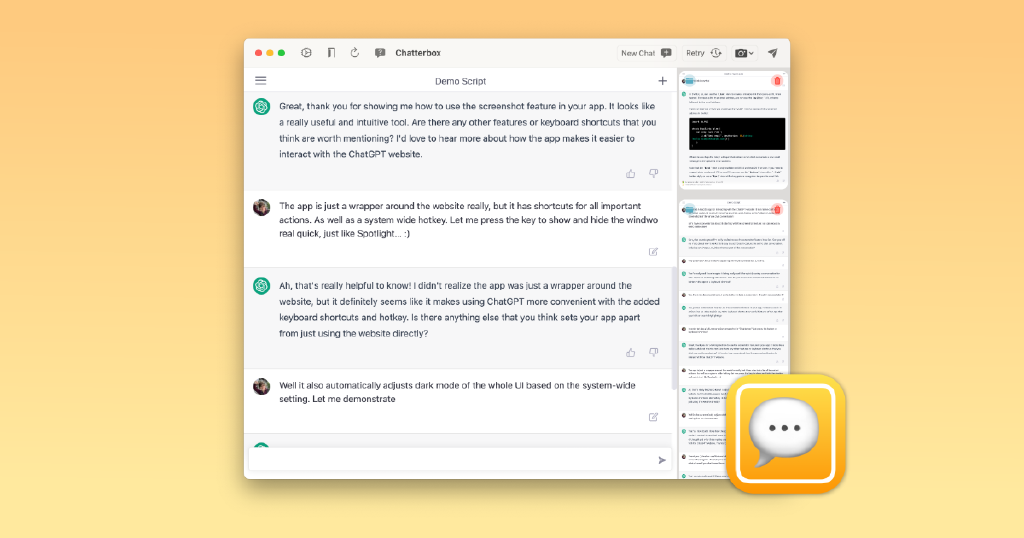

When I started prototyping Chatterbox for ChatGPT, I decided - as an experiment - to utilize ChatGPT in the process. I know, so meta, right? Here are some of my observations, thoughts and takeaways from that experiment.

Getting Started

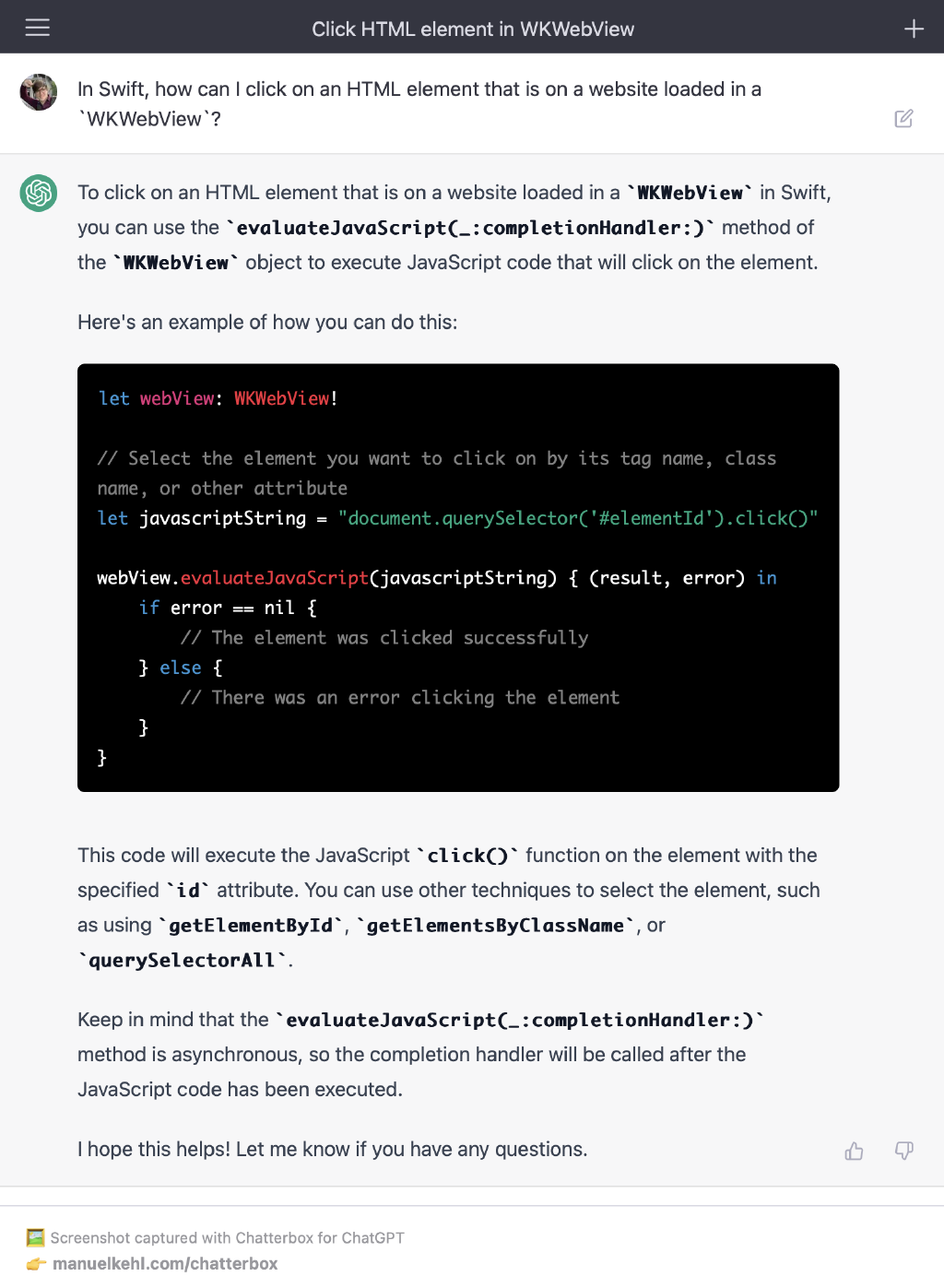

I wanted to build the native UI with SwiftUI (+AppKit where necessary) and I knew I’d want to embed the actual ChatGPT website in a WKWebView. I asked ChatGPT how to interact with HTML elements from the surrounding Swift code and learned about the evaluateJavaScript method of the WKWebView class. This allowed me to prototype the app and start experimenting with different ideas and features.
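For illustration, here is a minimal sketch of what calling into the page from Swift can look like. It is not Chatterbox's actual code: the helper names `makeFillScript` and `fill` and the `"textarea"` selector are assumptions made up for this example, and the real ChatGPT page may be structured differently. Only `evaluateJavaScript(_:completionHandler:)` is the WKWebView API mentioned above.

```swift
import Foundation
import WebKit

/// Hypothetical helper: builds a JavaScript statement that sets the value of
/// the first element matching `selector`.
func makeFillScript(selector: String, text: String) -> String {
    // JSON-encode both strings so quotes or newlines in the input cannot
    // break out of the JavaScript string literal.
    let encode: (String) -> String = { value in
        let data = try! JSONEncoder().encode(value)
        return String(data: data, encoding: .utf8)!
    }
    return "document.querySelector(\(encode(selector))).value = \(encode(text));"
}

/// Hypothetical helper: runs the generated script inside the web view.
@MainActor
func fill(_ webView: WKWebView, selector: String, text: String) {
    let script = makeFillScript(selector: selector, text: text)
    webView.evaluateJavaScript(script) { _, error in
        // A JavaScript exception (for example, no element matched the
        // selector) is reported here as an error.
        if let error = error {
            print("JavaScript failed: \(error)")
        }
    }
}
```

Once the page has finished loading, a call such as `fill(webView, selector: "textarea", text: "Hello")` would run the script in the page. Keeping the script builder separate from the web view call makes the string handling easy to check on its own.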

ChatGPT as a Mentor 🧑‍🏫

As I was building the prototype, I kept asking ChatGPT about topics I had not encountered before (while most of my SwiftUI experience was transferable to macOS, I did learn quite a few new things about AppKit in the process). In a way, ChatGPT played a role in the development of Chatterbox - it helped me learn the skills and techniques I needed to bring the app to life. When it worked, it got me there faster than searching Google and/or Stack Overflow, but I will say that, quite often, it gave results that were either slightly wrong or completely wrong and made up.

The first case is not an issue (IMO) because I only consult it for pointers on “how to get started with something” rather than copying and pasting whole blocks of code verbatim, so fixing minor issues on the fly while implementing a solution is easy enough most of the time. The problematic part is when ChatGPT gives a completely false and made-up answer but does it in a way that sounds fully confident and professional. On some occasions it hallucinated APIs that don’t exist at all (but sounded plausible enough). There’s something particularly disappointing about that experience because I always get my hopes up about this “super convenient magical API that would be a perfect fit for my problem” 🥲

ChatGPT as a Rubber Duck 🐤

Are you familiar with the term “rubber duck debugging”? If not, you should read up on it because it is an absolutely brilliant technique! While developing Chatterbox, I would sometimes ask ChatGPT for help when I ran into errors or problems. While the responses were often pretty generic and not too helpful, sometimes the mere act of formulating my question and seeing it reflected in ChatGPT’s response was enough for me to realize what the problem was 💡

Will ChatGPT become my go-to rubber duck debugging companion? No, because:

- Formulating questions in writing is tedious (I enjoy typing and think I’m pretty fast too, but just “talking to myself” is quicker)

- Anika the Radar Plushy (one of my dearest “souvenirs” from my time at Apple) would be really sad to be replaced by an AI chatbot. She’s such a loyal coding companion, never complaining and always listening to me blabbering on about all the random issues I encounter during my work ❤️

Summary

Ultimately (like many of you, I’m sure), I have come to the conclusion that ChatGPT is quite impressive and can be very useful, but it is just a tool after all - and a flawed one at that. Used as a research, learning and debugging companion (while reflecting critically on its output) it can speed things up and make you more effective. But it can also be distracting and outright confusing by “teaching you” things that don’t actually exist while sounding absolutely confident, often requiring you to “manually double check with Google” after all…

It has certainly earned its place in my toolset (and is always at hand with Chatterbox’s global hot key) and I’m curious to see how it develops and how I and others will continue to find creative uses for it and ways to integrate it into our workflows. Feel free to reach out if you’d like to share any cool use cases for ChatGPT that you discovered for yourself.